{'OVERVIEW'}

Adaptive Spaces is a top-down 2D game that explores how games can dynamically adapt to a player’s emotional state by combining behavioral gameplay data and real-time facial emotion recognition.

The system uses a multimodal fusion model to interpret player emotion and adjusts environmental elements such as lighting and gameplay pacing. The goal is to create a more responsive and immersive experience that reacts not only to player input, but also to player state.

ADAPTIVE SPACES ROOM OVERVIEW

{'KEY FEATURES'}

FACIAL RECOGNITION

Real-time facial emotion recognition.

BEHAVIORAL PLAYER MODELING

Keeps track of idle time, failures, and progress as the player moves.

MULTIMODAL EMOTION FUSION SYSTEM

Fuses the player's behavioral signals and facial emotion input into a single emotional state.

ADAPTIVE ENVIRONMENT

Adapts lighting, gameplay pacing, and camera FX to the player's emotional state.

{'TECHNICAL IMPLEMENTATION'}

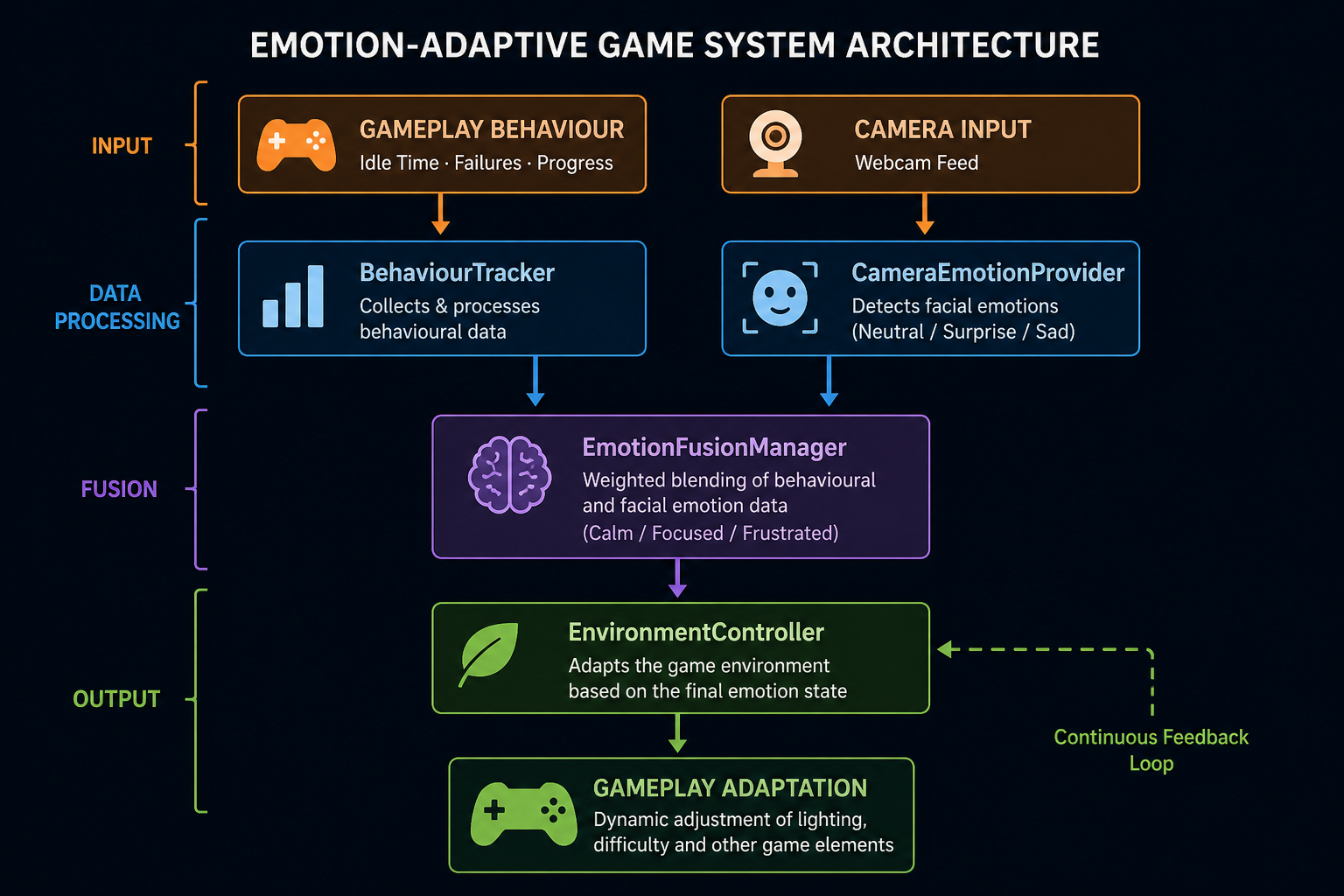

SYSTEM ARCHITECTURE

The system is divided into modular components responsible for data collection, emotion inference, and environmental adaptation. This separation keeps each component's responsibility clear and makes the system easier to extend.

EMOTION MODEL & FUSION

The system combines two sources of emotional data:

- Behavioral Signals: Idle time, failures, progression

- Facial Emotion Input: Neutral, Surprise, Sadness

These inputs are mapped into three gameplay-relevant emotional states:

- Calm

- Focused

- Frustrated

A weighted fusion model combines both sources:

- Behavior weight: 70%

- Camera weight: 30%
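The weighted fusion above can be sketched as follows. This is a minimal illustration under stated assumptions, not the project's actual code: it assumes each source has already been converted into one score per emotional state, and the names `fuse_emotions`, `BEHAVIOR_WEIGHT`, and `CAMERA_WEIGHT` are hypothetical. Only the 70/30 split comes from the description above.

```python
from typing import Dict, Tuple

STATES = ("calm", "focused", "frustrated")
BEHAVIOR_WEIGHT = 0.7  # behavior contributes 70%
CAMERA_WEIGHT = 0.3    # camera contributes 30%


def fuse_emotions(
    behavior: Dict[str, float], camera: Dict[str, float]
) -> Tuple[str, Dict[str, float]]:
    """Blend per-state scores from both sources and return the top state.

    Assumes each input maps the states in STATES to a score in [0, 1];
    missing states are treated as 0.
    """
    fused = {
        state: BEHAVIOR_WEIGHT * behavior.get(state, 0.0)
        + CAMERA_WEIGHT * camera.get(state, 0.0)
        for state in STATES
    }
    best = max(fused, key=fused.get)
    return best, fused


# Hypothetical scores: behavior suggests frustration, the camera reads calm.
behavior_scores = {"calm": 0.1, "focused": 0.3, "frustrated": 0.6}
camera_scores = {"calm": 0.7, "focused": 0.2, "frustrated": 0.1}
state, scores = fuse_emotions(behavior_scores, camera_scores)
print(state, scores)
```

In this example the two sources disagree, and the higher behavior weight decides the outcome in favor of the behavioral reading.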

ENVIRONMENTAL ADAPTATION

🎨 Lighting

Lighting temperature and color dynamically adjust based on emotional state:

- Calm → Warm, soft lighting

- Focused → Neutral, bright lighting

- Frustrated → Colder, harsher lighting

🎮 Gameplay Adaptation

Gameplay parameters subtly adjust to maintain engagement:

- Calm and Focused → Baseline gameplay is maintained

- Frustrated → Slight assistance is triggered

METRICS & EVALUATION

System stability was evaluated against the following:

- Average emotional state duration: ~2 seconds

- Reduced rapid emotion switching through cooldown logic

- Balanced contribution between behavior and camera input

These metrics helped ensure smooth and believable emotional transitions.
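One way cooldown logic and the average state duration could be computed is sketched below. This is an assumption-laden illustration: the class name `EmotionStateMachine`, the 1.5-second cooldown, and the sample timestamps are all hypothetical and chosen only to make the example easy to follow.

```python
from typing import List, Tuple


class EmotionStateMachine:
    """Holds the current state and rejects switches during a cooldown window."""

    def __init__(self, initial: str, cooldown: float) -> None:
        self.state = initial
        self.cooldown = cooldown  # hypothetical minimum seconds between switches
        self.last_switch = 0.0
        self.history: List[Tuple[float, str]] = [(0.0, initial)]

    def propose(self, candidate: str, now: float) -> str:
        """Accept `candidate` only if it differs and the cooldown has elapsed."""
        if candidate != self.state and now - self.last_switch >= self.cooldown:
            self.state = candidate
            self.last_switch = now
            self.history.append((now, candidate))
        return self.state

    def average_duration(self, now: float) -> float:
        """Mean time spent in each state so far, including the current one."""
        starts = [t for t, _ in self.history] + [now]
        durations = [b - a for a, b in zip(starts, starts[1:])]
        return sum(durations) / len(durations)


machine = EmotionStateMachine("calm", cooldown=1.5)
samples = [
    (0.5, "frustrated"),  # rejected: cooldown has not elapsed
    (1.0, "frustrated"),  # rejected
    (2.0, "frustrated"),  # accepted
    (2.5, "calm"),        # rejected: only 0.5 s since the last switch
    (4.0, "calm"),        # accepted
]
for timestamp, candidate in samples:
    machine.propose(candidate, timestamp)
print(machine.state, machine.average_duration(now=6.0))
```

Proposals that arrive inside the cooldown window are simply ignored, which is what suppresses rapid switching in this sketch.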

{'DEMO'}

FULL DEMO

{'CHALLENGES & SOLUTIONS'}

CHALLENGE: NOISY EMOTION DETECTION

Facial emotion data obtained from real-time inference showed significant fluctuations between frames, even when the user’s expression remained relatively stable. This resulted in rapid and inconsistent emotion switching, reducing the reliability of the system.

SOLUTION: SMOOTHING & CONFIDENCE-BASED INTERPOLATION

Temporal smoothing was introduced by interpolating between consecutive values, and a confidence-based approach was applied to reduce the influence of low-certainty predictions. This significantly stabilized the emotion signals and made the system's responses more consistent.
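A minimal sketch of this idea, assuming each frame yields a score and a confidence in [0, 1] for a given emotion: the smoothed value moves toward each new reading by a step scaled by that reading's confidence. The function name `smooth`, the `rate` parameter, and the sample readings are hypothetical, and the exact formula used in the project may differ.

```python
def smooth(previous: float, observed: float, confidence: float, rate: float = 0.5) -> float:
    """Move `previous` toward `observed` by a step scaled by confidence.

    A confidence of 0 leaves the value unchanged; a confidence of 1 moves it
    by the full `rate` fraction of the gap.
    """
    confidence = min(max(confidence, 0.0), 1.0)  # clamp to [0, 1]
    step = rate * confidence
    return previous + (observed - previous) * step


# Hypothetical per-frame (score, confidence) readings for one emotion.
frames = [(0.9, 0.9), (0.1, 0.2), (0.8, 0.8), (0.2, 0.1)]
value = 0.5
trace = []
for score, confidence in frames:
    value = smooth(value, score, confidence)
    trace.append(round(value, 3))
print(trace)
```

The low-confidence outliers in the second and fourth frames barely move the smoothed value, while the confident readings pull it more strongly.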

CHALLENGE: CONFLICTING EMOTIONAL SIGNALS

The system integrates both behavioral data (e.g., player performance and activity) and facial emotion input. In several cases, these two sources produced conflicting interpretations of the player’s emotional state, leading to ambiguity in determining the final emotion.

SOLUTION: WEIGHTED FUSION MODEL WITH ADJUSTABLE INFLUENCE

A weighted fusion model was implemented to combine both inputs in a controlled manner. Behavioral data was given a higher influence due to its consistency, while facial input contributed additional nuance. This allowed the system to resolve conflicts more reliably and produce balanced emotional outcomes.

CHALLENGE: ABRUPT ENVIRONMENTAL TRANSITIONS

Initial implementations caused sudden changes in lighting and gameplay parameters when emotional states shifted. These abrupt transitions negatively affected immersion and made the system feel artificial.

SOLUTION: INTERPOLATION ACROSS ALL ADAPTIVE SYSTEMS

All adaptive systems were updated to use smooth interpolation over time. This ensured gradual transitions between emotional states, resulting in a more natural and cohesive player experience. Temporal constraints were also introduced to prevent rapid oscillation between states.
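As an illustration of interpolated transitions, the sketch below moves a light color gradually toward a per-state target on every update. The RGB values in `LIGHTING_TARGETS`, the `speed` parameter, and the function names are assumptions made for the example; the write-up only specifies the qualities of each lighting state (warm, neutral, cold).

```python
from typing import Dict, Tuple

Color = Tuple[float, float, float]

# Hypothetical RGB targets for each emotional state.
LIGHTING_TARGETS: Dict[str, Color] = {
    "calm": (1.0, 0.85, 0.7),       # warm, soft
    "focused": (1.0, 1.0, 1.0),     # neutral, bright
    "frustrated": (0.7, 0.8, 1.0),  # colder, harsher
}


def lerp(a: float, b: float, t: float) -> float:
    """Linear interpolation from a to b by fraction t."""
    return a + (b - a) * t


def step_lighting(current: Color, state: str, dt: float, speed: float = 2.0) -> Color:
    """Move the current color a fraction of the way toward the state's target."""
    t = min(speed * dt, 1.0)  # clamp so a long frame cannot overshoot
    target = LIGHTING_TARGETS[state]
    r, g, b = (lerp(c, goal, t) for c, goal in zip(current, target))
    return (r, g, b)


color = LIGHTING_TARGETS["calm"]
first = step_lighting(color, "frustrated", dt=1 / 60)
for _ in range(120):  # two seconds at 60 updates per second
    color = step_lighting(color, "frustrated", dt=1 / 60)
print(tuple(round(c, 3) for c in color))
```

Because each update covers only a fraction of the remaining gap, the color eases toward the target instead of snapping to it when the state changes.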

{'DEVELOPMENT TIMELINE'}

WEEK 1-2: CORE DESIGN, GAMEPLAY AND MOVEMENT

Focused on implementing the core player movement system and establishing a basic gameplay loop within a room-based environment. This phase ensured that the project had a stable and functional foundation that could support more advanced systems in later stages.

WEEK 3-4: BEHAVIOR TRACKING AND EMOTION SYSTEM

Introduced a behavior tracking system to monitor player activity, alongside the integration of real-time facial emotion recognition. These inputs were then combined through an initial emotion fusion model, forming the basis for interpreting the player’s emotional state.

WEEK 5-6: ENVIRONMENTAL ADAPTATION

The system was extended to include dynamic environmental adaptation driven by the player’s emotional state. This included implementing responsive lighting and gameplay adjustments, with an emphasis on smooth and immersive transitions.

WEEK 7-8: POLISHING, TESTING AND PRESENTATION

Focused on improving system stability, handling edge cases, and refining the overall experience. The project was then prepared for presentation with debugging tools and a recorded demonstration showcasing its functionality.

{'WHAT I LEARNED'}

This project provided practical experience in building a multimodal system that combines behavioral data and real-time facial emotion recognition. It highlighted the challenges of working with noisy and sometimes conflicting inputs, and the importance of techniques such as smoothing, weighting, and temporal constraints to ensure stable results.

It also reinforced the value of modular system design when integrating external tools into a larger application. Overall, the project strengthened my understanding of affective computing and how adaptive systems can enhance interactive experiences.

{'FUTURE IMPROVEMENTS'}

ADDITIONAL BIOMETRIC INPUTS

Add data such as heart rate or eye tracking to improve the accuracy and robustness of emotion detection.

MACHINE LEARNING-BASED ADAPTATION

Implement machine learning algorithms to improve the system's ability to adapt to individual players' emotional responses over time.

EXTRA ROOMS

Introduce additional rooms with varied mechanics and interactions to assess a broader range of player skills and behaviors.